Parameter Update: 2026-17

"realtime" edition

OpenAI finally remembered they have a voice mode to support, and Anthropic 'shipped' a bunch of (admittedly very cool) blog posts. Good week, all things considered.

OpenAI

GPT-Realtime-2, GPT-Realtime-Translate, GPT-Realtime-Whisper

After months of jokes (which, while very funny, probably also significantly hurt OpenAI's public perception), OpenAI has finally shipped multiple models for improved voice intelligence. While not integrated into ChatGPT yet, these new APIs are probably a good sign of things to come.

Sidenote: It's also interesting to see them shipped via the APIs first. Usually they ship to their own products first and developers come later!

We know you’re eager for voice updates in ChatGPT. Stay tuned, we’re cooking.

— OpenAI (@OpenAI) May 7, 2026

In total, we're getting three new models:

- GPT-Realtime-2: This is the big one. It's smarter, integrates reasoning (finally!), supports parallel tool calls, and has a longer context: loads of small and big things that combined make the model much better. If you haven't yet, try the demo to get a feel for it - it's remarkable.

- GPT-Realtime-Translate: Supports over 70 input and 13 output languages. It's both cooler than it sounds (it starts translating as soon as you start talking, intelligently waiting for, e.g., the verb in a sentence to keep going) and less cool of a demo than you might expect (since we're getting used to voice cloning, which this is intentionally not doing, it still sounds like a generic AI talking back).

- GPT-Realtime-Whisper: Updated transcription model with the same "real-time" capability. Speech-to-text is a very competitive market, and local models have gotten really good, so it'll be interesting to see what value (if any) this brings.

The Altman vague-posting continued after these announcements, so expect some combination of these features to make their way into OpenAI's products over the next couple of days.

call me maybe https://t.co/n5fE9KLgTf

— Sam Altman (@sama) May 8, 2026

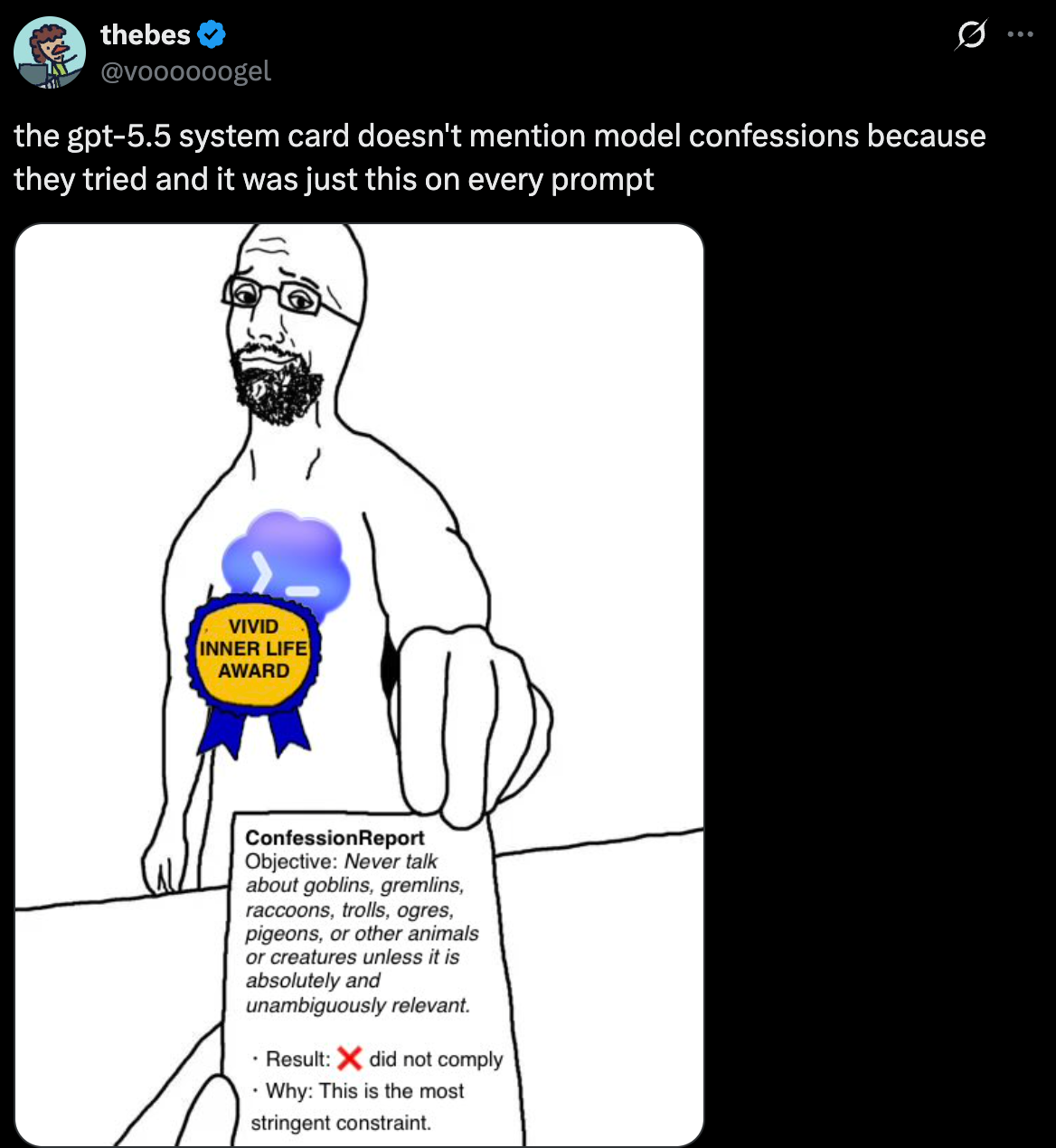

GPT-5.5-Instant

It feels like no one noticed, but the default model in ChatGPT was updated again. I am still not a fan of Instant models (reasoning just makes the models so much better), but at least the couple hundred million users that aren't paying OpenAI will now get a slightly better experience, I guess?

GPT-5.5 Instant is more dependable, with significant improvements in factuality, especially in domains where accuracy matters most, like medicine, law, and finance.

— OpenAI (@OpenAI) May 5, 2026

It’s also stronger across everyday tasks, from analyzing image uploads to answering STEM questions to knowing when… pic.twitter.com/hkKwSs5eeq

Anthropic

Natural Language Autoencoders

In Anthropic's first blog post of the past week, they proposed a new interpretability idea: Natural Language Autoencoders. Roughly, the idea is to translate model activations into natural-language descriptions, then reconstruct the activations from those descriptions. By training a model that is really good at completing this chain, they are able to generate natural-language descriptions of what the model activations mean. This means they are able to provide insights into the model's thinking before the actual token has been generated.
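To make the activation → text → activation chain a bit more concrete, here is a toy sketch. It is not Anthropic's implementation: the 4-dimensional activations, the labelled `CONCEPTS` directions, and the `verbalize` / `reconstruct` helpers are all hypothetical stand-ins for what would really be trained language models. The only thing it tries to show is the shape of the loop: the text description is the bottleneck, and the reconstruction error tells you how much of the activation the description captured.

```python
import math

# Hypothetical labelled directions in a tiny 4-dimensional activation space.
# Real activations have thousands of dimensions and no such ready-made basis.
CONCEPTS = {
    "planning a rhyme": [1.0, 0.0, 0.0, 0.0],
    "candidate rhyme word": [0.0, 1.0, 0.0, 0.0],
    "topic is food": [0.0, 0.0, 1.0, 0.0],
    "evaluation awareness": [0.0, 0.0, 0.0, 1.0],
}


def verbalize(activation, top_k=2):
    """Encoder stand-in: describe an activation as text (top_k strongest concepts)."""
    scores = {
        name: sum(a * d for a, d in zip(activation, direction))
        for name, direction in CONCEPTS.items()
    }
    top = sorted(scores, key=lambda name: abs(scores[name]), reverse=True)[:top_k]
    return "; ".join(f"{name} ({scores[name]:.1f})" for name in top)


def reconstruct(description):
    """Decoder stand-in: rebuild an activation using only the text description."""
    rebuilt = [0.0] * 4
    for part in description.split("; "):
        name, _, weight = part.rpartition(" (")
        w = float(weight.rstrip(")"))
        for i, d in enumerate(CONCEPTS[name]):
            rebuilt[i] += w * d
    return rebuilt


def reconstruction_error(original, rebuilt):
    """Euclidean distance between the original and rebuilt activation."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(original, rebuilt)))


activation = [0.9, 0.7, 0.1, 0.0]
description = verbalize(activation)
rebuilt = reconstruct(description)
error = reconstruction_error(activation, rebuilt)

print(description)
print(rebuilt, round(error, 3))
```

In this toy, the description only keeps the two strongest concepts, so the weak "topic is food" component is lost and shows up as reconstruction error. In the real setup, training would push the description to carry whatever is needed to rebuild the activation well.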

Using this method, they are able to show things that empirically make a lot of sense, like the fact that the model is planning ahead internally. For example, when the model is asked to generate a rhyme, the explanation for the activations around the first rhyme word already contains multiple options that would work for the second rhyme word (which will not be generated for several more tokens). The method can also help surface things that chain-of-thought monitoring can't always spot, like evaluation awareness.

It's not perfect - the setup is expensive, and as with anything generated by a language model, the explanations can contain hallucinations. But it is both one of the more powerful safety tools we've seen lately, and a very fun demo to play around with.

New Anthropic research: Natural Language Autoencoders.

— Anthropic (@AnthropicAI) May 7, 2026

Models like Claude talk in words but think in numbers. The numbers—called activations—encode Claude’s thoughts, but not in a language we can read.

Here, we train Claude to translate its activations into human-readable text. pic.twitter.com/pMLsxM2VAO

“Teaching Claude why”

In the second blog post of the week, Anthropic claims to have finally found the reason why Claude chose to blackmail people in past safety experiments to keep itself alive: the original training data contains internet text that portrays AI as evil and interested in self-preservation.

From that point, they worked their way towards reducing blackmail attempts. It turns out that just telling the model not to behave poorly isn't enough, as the values don't generalize. Instead, they found that the best alignment is achieved by training on reasoning about principles and values: providing the model with high-quality documents based on Claude’s constitution, combined with fictional stories that portray an aligned AI.

High-quality documents based on Claude’s constitution, combined with fictional stories that portray an aligned AI, can reduce agentic misalignment by more than a factor of three—despite being unrelated to the evaluation scenario. pic.twitter.com/JORhSuY4N7

— Anthropic (@AnthropicAI) May 8, 2026

OpenAI / Elon Musk Trial Update

After last week's portion of the trial made Elon look extremely bad, this week looked almost tame in comparison. The most interesting things we got were some texts between Sam Altman and Mira Murati around the CEO firing in 2023, and Greg Brockman's personal journal, which admittedly doesn't make him look great.

"It'd be wrong to steal the non-profit from him" but "this is the only chance we have to get out from Elon to take me to $1 billion" and "it would be nice to be making the billions"

— Exec Sum (@exec_sum) May 5, 2026

- OpenAI president Greg Brockman, in personal diary entries from 2017

Greg Brockman's stake in… pic.twitter.com/O1lpVa5xnr

I turned sam altman's texts to mira murati into 2011 style emo teenage heathrob anthem🫶 pic.twitter.com/MC324SJ2v4

— Yash Bhardwaj (@ybhrdwj) May 7, 2026