Parameter Update: 2026-16

"goblin" edition

Much quieter week than I expected. Even the Elon/OpenAI trial was a bit boring. Huge week for goblin enthusiasts, though.

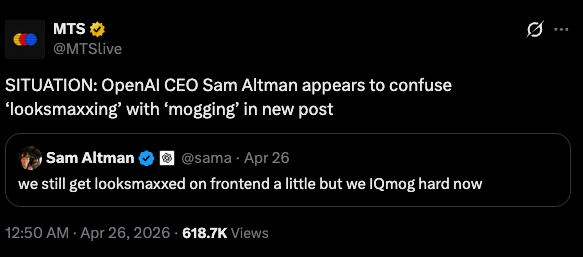

OpenAI

Elon Trial

My timeline has been surprisingly quiet about the first couple days of the Elon/OpenAI trial over the past week - but, looking at prediction market odds, it might be because Elon isn't doing too well. Anyway, I've mostly been following The Verge's coverage of the trial, and it does include some gems (even though there was a whole lot of "what are we doing here" to be found):

- They struggled to put together a jury that would be impartial about Musk. His lawyers kept objecting to jurors until the judge ruled "The reality is that people don’t like him… Many people don’t like him, but that doesn’t mean that Americans nevertheless can’t have integrity for the judicial process" (lol)

- Musk emailed Greg Brockman to discuss a settlement. Greg noted they could each drop the lawsuits against the other, to which Elon replied "By the end of the week, you and Sam will be the most hated men in America. If you insist, so be it."

- Elon confirmed xAI had in the past used model distillation to train their own models.

The case will continue this week, with (from my understanding) cross-examination of Altman coming up - so we're bound to be getting some more info soon.

GPT-5.5-Cyber

This announcement seems to have slipped under most people's radar, but OpenAI has updated their "-Cyber" model series (they shipped GPT-5.4-Cyber before) with another model. Once again, they aren't sharing any benchmarks publicly, but it's hard to imagine this one isn't close to Mythos on cyberattack capabilities specifically, given GPT-5.5 was already getting there in some benchmarks.

Not a huge fan of these "we trained a dangerous model but won't give anyone access or provide public benchmarks" releases lately - really feels like we're getting the short end of the stick.

we're starting rollout of GPT-5.5-Cyber, a frontier cybersecurity model, to critical cyber defenders in the next few days.

— Sam Altman (@sama) April 30, 2026

we will work with the entire ecosystem and the government to figure out trusted access for cyber; we want to rapidly help secure companies/infrastructure.

Goblins

In a slightly more fun piece of news: Someone realized the new Codex system prompt reminded the model not to talk about "goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query" - not just once, but twice!

— Eric W. Tramel (@fujikanaeda) April 28, 2026

This prompt leak turned into a very lively discussion that ended up forcing OpenAI to comment on it themselves:

We’re talking about Goblins. https://t.co/dqmcLGCW71

— OpenAI (@OpenAI) April 30, 2026

The blog post remains very vague, but apparently the whole thing is a post-training artifact stemming from the "nerdy" personality they introduced (and then retired) a while ago. The blog post features a couple hilarious graphs showing 'Prevalence of “goblin” or “gremlin” over time'. It also includes a command to modify the Codex system prompt to remove the instruction not to talk about goblins - if you want to let the model be itself.

Anyway - I ran the command and, a few days later, tried out Codex's new "pet" feature, telling the model to design whatever pet it would like for itself. Happy to report I am stuck with 'Glim' now.

Mistral Medium 3.5

Mistral is still training big models!

Well, big-ish. At least we're getting a new frontier model release from them. It's not state-of-the-art, with performance landing around Claude Sonnet 4.5, and context limited to 256K tokens, but at least it's open, with the release being under a modified MIT license.

Mistral Medium 3.5, a new flagship model in public preview by @MistralAI that merges instruction-following, reasoning, and coding into a single 128B dense model with a 256k context window and configurable reasoning effort. It's a new default model for Mistral Vibe and Le Chat.… pic.twitter.com/vcW1GaB3s1

— Mistral Vibe (@mistralvibe) April 29, 2026

My timeline made a big deal about the fact that the model uses an older architecture (dense + 256K context), as basically everyone is training MoEs these days, and the much higher API pricing compared to DeepSeek V4 (which has gained a lot of popularity lately, as it's almost free through their API). Personally, I am more concerned with the former (having a European frontier lab actually train state-of-the-art models would be good) than the latter (it's an open model, if you're concerned about prices just self-host). I will also remind everyone that LLMs, especially Chinese open source models, are often overfit on specific benchmarks (there is probably some cope here, as I would really like this model to be good, but yelling "model x scores better in benchmark y" feels semi-productive).
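For a rough sense of what "just self-host" means for a 128B dense model, here is a back-of-the-envelope sketch of the memory needed for the weights alone. The bytes-per-parameter figures are my own assumptions about common precisions, not anything Mistral has published, and the numbers ignore KV cache, activations, and runtime overhead, so real requirements will be higher.

```python
def weight_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes).

    Billions of parameters times bytes per parameter gives GB directly.
    """
    return params_billion * bytes_per_param


PARAMS_B = 128  # dense parameter count quoted in the announcement

# Assumed precisions (hypothetical deployment choices, not official numbers)
for name, nbytes in [("bf16", 2.0), ("int8", 1.0), ("4-bit", 0.5)]:
    print(f"{name}: ~{weight_memory_gb(PARAMS_B, nbytes):.0f} GB")
```

Since the model is dense, all 128B parameters are active for every token, so unlike with an MoE there is no smaller "active parameter" count to lean on when estimating compute per token.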

new mistral model: 128B dense with an arch from 3 years ago (llama 2), very low context (128k), priced higher than deepseek v4 pro (1.6T total params, 1M context) and every other oss model that outperforms it

— elie (@eliebakouch) April 29, 2026

this is very sad https://t.co/l6WdElXq1D pic.twitter.com/IwRlgnHHov

Talkie

Almost certainly not the most important piece of news, but definitely one of the coolest things I have seen in a while: someone trained a language model on only (to the best of their abilities) pre-1931 text. At 13B it's not a huge model, but much larger than these types of experiments usually get. The coolest thing: you can talk to it in your browser, right here.

Announcing Talkie: a new, open-weight historical LLM! We trained and finetuned a 13B model on a newly-curated dataset of only pre-1930 data. Try it below!

— David Duvenaud (@DavidDuvenaud) April 27, 2026

with @AlecRad and @status_effects 🧵 pic.twitter.com/kThUESG13e

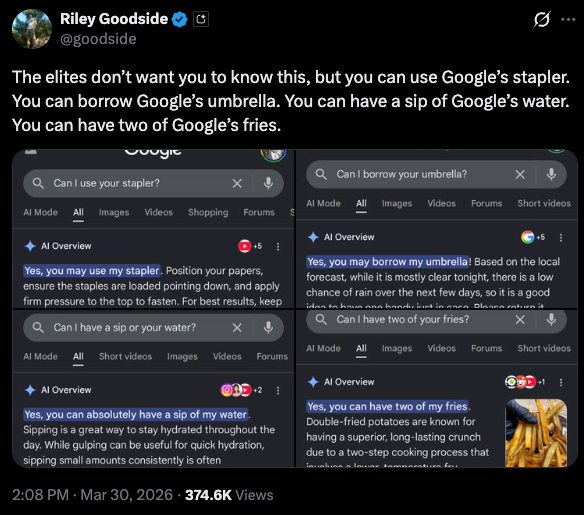

Exa x Google

No real analysis on this at all, just something slightly bizarre: Google has partnered with Exa to provide search grounding for Gemini? Great job, Exa sales people! (but why would Google partner with another search engine?)

We're excited to partner with Google to offer Grounding With Exa inside of Gemini models!

— Exa (@ExaAILabs) April 28, 2026

Using Exa's agent-first search, Gemini models can now access billions of websites, technical docs, papers, people, companies, and more.

10^18🤝10^100 pic.twitter.com/1J11T1KzO7