Parameter Update: 2026-14

"cyber-warfare-over-refusal" edition

The last couple of weeks have been unusually busy, so it's good to see some return to normalcy now. That being said, I do wonder what's going on at Anthropic, and we're expecting a bigger OpenAI model launch very soon.

Anthropic

Claude Opus 4.7

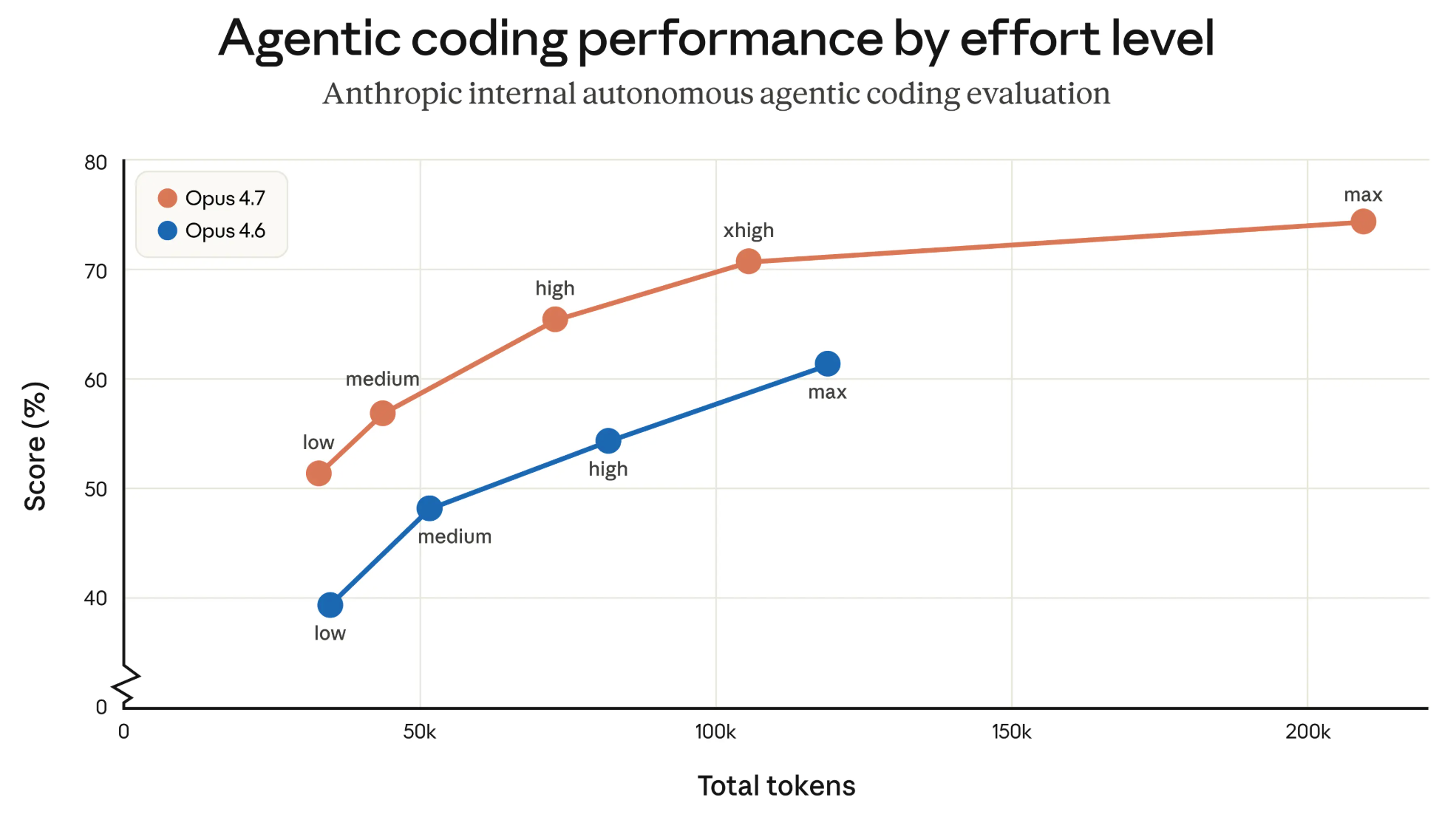

Two weeks after announcing the "machine god" that is Claude Mythos (if you trust the hype), Anthropic announced a surprisingly boring model update - a minor version bump to Opus. Looking at the benchmarks, it seems like a small-but-notable improvement.

Introducing Claude Opus 4.7, our most capable Opus model yet.

It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back.

You can hand off your hardest work with less supervision. pic.twitter.com/PtlRdpQcG5

— Claude (@claudeai) April 16, 2026

On internal benchmarks, it matches Opus 4.6 at much better token efficiency:

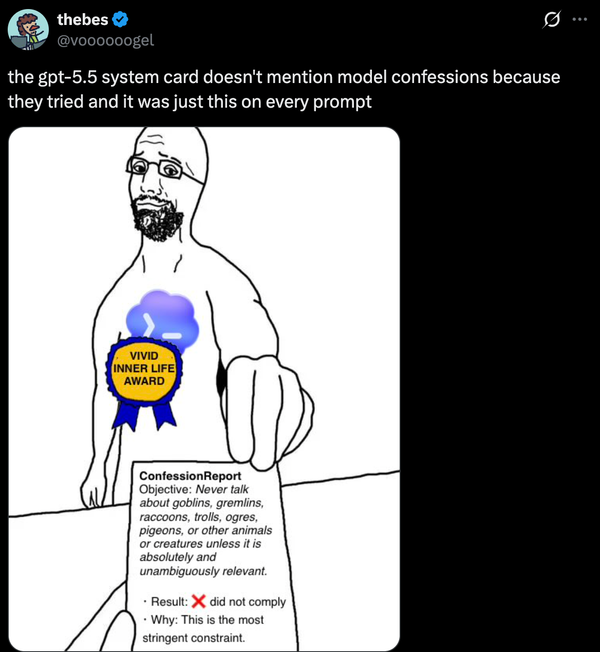

The real story appears to be a bit more nuanced, though. At least on my timeline, people have consistently complained about the over-refusals and inconsistent results:

anthropic has lost the divine mandate.

opus 4.7 is a bad model.

— ben (is hiring engineers) (@benhylak) April 16, 2026

Oh my god Opus 4.7 really is awful. Claude decided to stop working on a project we've been making for years because it

1. Suddenly became adamant it MUST refuse to make malware

2. Decided to spend ages scanning THE PROJECT IT MADE for malware

3. Found none

4. Refused anyway pic.twitter.com/dWERWxyF7w

— Lexer (@LexerLux) April 18, 2026

These complaints appear to stem from Anthropic rolling out "safeguards that automatically detect and block requests that indicate prohibited or high-risk cybersecurity uses" to prepare for an eventual Mythos launch. And even the better token efficiency might not be all that useful, given that the new tokenizer seems to use more tokens than before to encode the same text:

So basically 33% more tokens with the new Opus 4.7 tokenizer. That's one chonky API revenue increase, while keeping token prices "the same". pic.twitter.com/JjokYNhq5J

— Mario Zechner (@badlogicgames) April 17, 2026

On top of that, the model will use more tokens on higher reasoning settings. This fumble of a launch follows rumors of Anthropic launching their own vibe coding platform and their own Design application, as well as consistent complaints about the new Claude Desktop app. I've personally experienced some of these issues, and it really seems like the Anthropic team is both distracted and compute-constrained right now - while still training the best model out there. Add the ongoing DOD drama, and it seems like a very interesting time for the company.
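To put the tokenizer claim quoted above in numbers, here is a rough back-of-the-envelope sketch. It takes the 33% figure from the tweet at face value (I haven't verified it) and assumes a flat per-token price and the same inflation on input and output. The function names are made up for illustration and are not part of any API.

```python
def relative_cost(token_inflation: float, token_savings: float = 0.0) -> float:
    """Cost of the same workload relative to the old tokenizer (1.0 = unchanged).

    token_inflation: extra tokens needed to encode the same text (0.33 = +33%).
    token_savings:   fraction of tokens the model saves by being more efficient.
    Assumes the per-token price stays the same.
    """
    return (1.0 + token_inflation) * (1.0 - token_savings)


def break_even_savings(token_inflation: float) -> float:
    """Token savings needed to cancel out the tokenizer inflation."""
    return 1.0 - 1.0 / (1.0 + token_inflation)


if __name__ == "__main__":
    inflation = 0.33  # assumption: the unverified figure from the tweet
    print(f"Same text, same price: {relative_cost(inflation):.2f}x the cost")
    print(f"Savings needed to break even: {break_even_savings(inflation):.1%}")
```

In other words, under these assumptions the model would have to get the same work done with roughly a quarter fewer tokens before the bill looks like it did before.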

OpenAI

Codex Improvements

The Codex app got a number of updates this week, turning it into more of a "general purpose productivity tool" than pure developer tooling. The app can now:

- Interact with any application on your Mac (in the background, without it taking focus!)

- Generate images using gpt-image-1.5 and automatically include them in projects

- Run automations in existing threads

They also added 90+ new 'Plugins' (these appear to just be Skills?) for existing apps like Microsoft Teams, Google Calendar, Attio, Binance, ...

Codex for (almost) everything.

It can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks. pic.twitter.com/UEEsYBDYfo

— OpenAI (@OpenAI) April 16, 2026

GPT-Rosalind

While Anthropic positioned Mythos' cyberattack capabilities as mostly 'emergent' and a side effect of overall scaling, OpenAI announced this week that they intentionally tuned a new model for a higher-risk domain - biological reasoning and drug discovery. Like Anthropic, OpenAI is limiting access to selected partners for now. Going one step further, they didn't even provide a full system card or benchmarks (except for one barely useful graph) - boo!

GPT-Rosalind, our Life Sciences model series, is optimized for scientific workflows, with stronger performance in protein and chemical reasoning, genomics analysis, biochemistry knowledge, and scientific tool use. pic.twitter.com/PTdbHMgR50

— OpenAI (@OpenAI) April 16, 2026

Google DeepMind

Gemini Robotics ER 1.6

While Anthropic and OpenAI are advancing cyber-attack capabilities and biological warfare respectively, DeepMind is focussed on embodied AI in the form of visual and spatial reasoning with their Gemini Robotics ER 1.6 model. Most of the performance improvements seem like small-but-significant upgrades over the Gemini 3.0 Flash base model, so the most interesting things here are (1) the fact that they highlight specific functionality like instrument reading, (2) their ongoing collaboration with Boston Dynamics (even after Google sold the company in 2017), and (3) the fact that this model exists at all (you wouldn't bother fine-tuning a model for robotic reasoning tasks unless you had some reason for it to exist, and Google keeps doing it).

Introducing Gemini Robotics ER 1.6, our new SOTA robotics model 🤖 which excels at visual and spacial reasoning, now available via the Gemini API! pic.twitter.com/orAoslp4Zu

— Logan Kilpatrick (@OfficialLoganK) April 14, 2026