Parameter Update: 2026-13

"molotov mythos" edition

Anthropic announces a model but won't let anyone use it, Meta finally produces something under Alexandr Wang, and someone threw a Molotov cocktail at Sam Altman's house. What a week.

Claude Mythos

Remember that leaked blog post about Anthropic's new Mythos model? Well, this week Anthropic officially launched the model as part of "Project Glasswing," a limited rollout to 12 partner organizations (Amazon, Apple, Microsoft, CrowdStrike, etc.) for "defensive security work."

The pitch: During testing, the model discovered so many new 0-day vulnerabilities that Anthropic doesn't feel it's safe to give the general public access before defenders have had some time to catch up. There was some pushback to this strategy, with people comparing it to OpenAI famously holding back GPT-2 in 2019 because it was "too dangerous" and calling it a brilliant marketing strategy.

From my point of view it seems unlikely to be a complete marketing stunt - undoubtedly Anthropic could be making much more money if they actually sold enterprise access to a model that scores the (truly impressive) benchmark results Mythos does. But I also hope that holding launches back like this won't work long-term and doesn't become a general trend moving forward. I don't have a good answer for what to do instead, but "this model is too dangerous, better give it exclusively to Microsoft for six months" feels like a poor solution. And if you look at the benchmark results, it's not implausible that the model would be a lot better at cybersecurity work than previous models:

HOLY SHIT 😱 pic.twitter.com/ScP8WpZtVh

— Pliny the Liberator 🐉󠅫󠄼󠄿󠅆󠄵󠄐󠅀󠄼󠄹󠄾󠅉󠅭 (@elder_plinius) April 7, 2026

In other news, the Mythos system card (warning: large PDF) doesn't give us any technical details, but is still a very fun read. It also contains a genuinely funny joke Mythos made on the Anthropic Slack:

A few more random rabbit holes to go down:

- Architectural speculation

- Comparison to GPT-5.4-Pro (which has been surprisingly absent in the discourse)

- Conspiracy theory: Opus 4.6 is really Sonnet 5, Mythos is really Opus 5

Meta

Muse Spark

In other news, Meta Superintelligence Labs (MSL) finally shipped something significant! Muse Spark is MSL's first model, built over nine months under Alexandr Wang. It's live in the Meta AI app now, with Facebook/Instagram/WhatsApp coming soon.

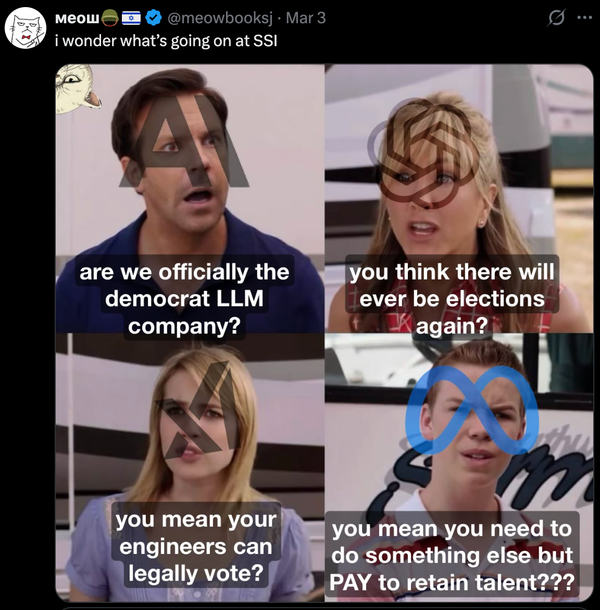

Meta is being unusually humble, saying it "doesn't mark a new state of the art" but is "competitive at certain tasks." Interesting tidbit: Apollo Research found Muse Spark has the highest rate of "evaluation awareness" they've ever tested — it frequently recognized when it was being safety-evaluated and adjusted behavior accordingly. Make of that what you will.

Also notable: Muse Spark is proprietary. After years of Meta open-sourcing Llama, the weights are now closed, which is extremely unfortunate. Zuck says they "hope to open-source future versions." Either way - it's good to have them back as a competitive player.

Rooting for Meta to ship great models, but man this is a chart crime. https://t.co/j9oAhkBbOD

— Armen Aghajanyan (@ArmenAgha) April 8, 2026

Tokenmaxxing Leaderboard

While MSL is fighting to regain its relevance, Meta has also been pushing AI usage internally through a "Claudenomics tokenmaxxing" leaderboard, which apparently awarded employees titles like "Token Legend" and "Session Immortal". While some of the spend calculations were probably pretty far off the mark, the leaderboard still got shut down later in the week.

Why does Ross, the largest friend, not simply eat the other five? https://t.co/dJoQOGPIxv pic.twitter.com/xyBeOqC7xs

— meow☢️ (@meowbooksj) April 8, 2026

OpenAI

Codex $100 plan + usage nerf

All good things must come to an end. Accordingly, the moment the 2x usage promo on the ChatGPT Plus plan ran out at the end of last month, OpenAI announced a new $100/month Pro tier, offering 5x more usage (i.e., linear usage scaling with price), while "rebalancing" usage limits for "more, shorter sessions" over the course of the week. They insist it's not a decrease in overall usage, but in my experience I now run into limits dramatically faster than before, so that feels untrue. Bummer - I really liked GPT-5.4, but I'm not willing to pay that much for it.

We’re updating our ChatGPT Pro and Plus subscriptions to better support the growing use of Codex.

— OpenAI (@OpenAI) April 9, 2026

We’re introducing a new $100/month Pro tier. This new tier offers 5x more Codex usage than Plus and is best for longer, high-effort Codex sessions.

In ChatGPT, this new Pro tier…

Altman House molotov'd

On Friday at 3:45 AM, a 20-year-old threw a Molotov cocktail at Sam Altman's San Francisco home. No injuries; suspect arrested an hour later at OpenAI HQ threatening to burn down the building. Then on Sunday, a second incident: two suspects allegedly fired a gun at the property.

Altman responded with a blog post that was part family photo, part manifesto, part damage-control memo. He wrote that he had “underestimated the power of words and narratives” and called for people to “de-escalate the rhetoric and tactics and try to have fewer explosions in fewer homes, figuratively and literally.”

He also connected it to "an incendiary article about me" — Ronan Farrow and Andrew Marantz's 15,000-word New Yorker piece titled "Can He Be Trusted?" The profile, based on 100+ interviews, includes sources calling Altman "unconstrained by truth" and one board member using the word "sociopath."

Either way, this has led to some rather drawn-out discussions regarding stochastic terrorism and the #StopAI movement that I don't really have strong opinions on yet, except for saying that firebombing a building is obviously bad.

I’m sorry guys, but this stochastic terrorism shit is retarded. Y’all are attacking these higher, discursive moral issues, but the root disagreement is the same epistemic schism as always:

— corsaren (@corsaren) April 11, 2026

1. If the StopAI folks are correct about x-risk, then their rhetoric is clearly justified… https://t.co/6S3s4H4C38