Parameter Update: 2026-11

"big brain model" edition

I might be falling for the hype again, but I'm genuinely excited about Mythos (hope it's not an April Fools' joke?)

Gemini 3.1 Flash Live

It's been a minute since audio-to-audio models received a significant upgrade, so it's good to see that people are still working on them. Google's new model is faster and has better function calling and a much longer context window. In my experience, the old Gemini Live model was usually outperformed by GPT-Realtime, so I'm happy to see Google not just catching up but beating OpenAI by some margin.

Developers no longer have to choose between speed and reliability when building voice-first AI agents. Gemini 3.1 Flash is optimized for real-time dialogue at scale.

— Google (@Google) March 26, 2026

Key improvements:

⚡️ Faster response times

🗣️ More natural dialogue with even lower latency

🧠 Better… pic.twitter.com/59Ovt292kT

TurboQuant

Despite the original paper being released around a year ago, this week a new blog post catapulted TurboQuant into our collective consciousness. The trick is relatively straightforward: use PolarQuant to compress the KV cache of transformer models, leading to a ~6x memory reduction (for the cache specifically).
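To make the general "rotate, then quantize" idea behind this kind of KV cache compression concrete, here is a minimal pure-Python sketch under loudly stated assumptions: it is not TurboQuant or PolarQuant. It swaps in a randomized Hadamard rotation (random sign flips followed by a Walsh-Hadamard transform) and a plain min/max uniform scalar quantizer, and every function name (`rotate`, `compress`, `compression_ratio`, ...) is hypothetical. The rotation spreads outlier channels across all coordinates so the available quantization levels are used more evenly, and because it is orthogonal it can be undone exactly after dequantization.

```python
import math
import random


def fwht(vec):
    """Orthonormal fast Walsh-Hadamard transform (length must be a power of two).

    H / sqrt(n) is symmetric and orthogonal, so applying this twice
    returns the original vector.
    """
    v = list(vec)
    n = len(v)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = v[j], v[j + h]
                v[j], v[j + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [x * scale for x in v]


def rotate(x, signs):
    """Hypothetical random rotation R = H * D: flip signs with D, then mix with H."""
    return fwht([s * xi for s, xi in zip(signs, x)])


def inverse_rotate(y, signs):
    """R^-1 = D * H, since both factors are their own inverses."""
    return [s * yi for s, yi in zip(signs, fwht(y))]


def quantize(x, bits):
    """Uniform min/max scalar quantizer: integer codes plus (offset, step)."""
    levels = 2 ** bits - 1
    lo, hi = min(x), max(x)
    step = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((xi - lo) / step) for xi in x]
    return codes, lo, step


def dequantize(codes, lo, step):
    return [lo + c * step for c in codes]


def compress(x, signs, bits):
    """Rotate one key/value vector, then quantize every coordinate to `bits` bits."""
    return quantize(rotate(x, signs), bits)


def decompress(codes, lo, step, signs):
    return inverse_rotate(dequantize(codes, lo, step), signs)


def compression_ratio(dim, bits, base_bits=16, overhead_bits=32):
    """Saving vs. fp16, counting two fp16 scalars (lo, step) stored per vector."""
    return base_bits / (bits + overhead_bits / dim)


if __name__ == "__main__":
    random.seed(0)
    dim = 128  # assumed head dimension
    signs = [random.choice((-1.0, 1.0)) for _ in range(dim)]
    key = [random.gauss(0.0, 1.0) for _ in range(dim)]
    codes, lo, step = compress(key, signs, bits=4)
    approx = decompress(codes, lo, step, signs)
    err = math.sqrt(sum((a - b) ** 2 for a, b in zip(approx, key)))
    print("relative error:", err / math.sqrt(sum(k * k for k in key)))
    print("ratio at 3 bits:", compression_ratio(dim, 3))
```

With `dim = 128` and 3-bit codes, the per-vector offset and step add a quarter of a bit per element, so this toy's saving over fp16 is 16 / 3.25, just under 5x. Getting to the ~6x figure means spending fewer bits per coordinate, which is where a better-designed quantizer than this min/max one matters.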

That by itself is cool, but probably wouldn't be newsworthy. What was newsworthy: the news "breaking" somehow led to the first decline in memory prices in months, with Micron stock crashing around 15% over the past week (or maybe it was just the OpenAI news causing a drop in demand?).

A great example that medium shapes impact.

— Jia-Bin Huang (@jbhuang0604) March 26, 2026

A research paper on arXiv 11 months ago:

👉 2 citations so far

An accessible blog post one day ago:

👉 12 M views, instant community adoption https://t.co/tqFrtwdC3F

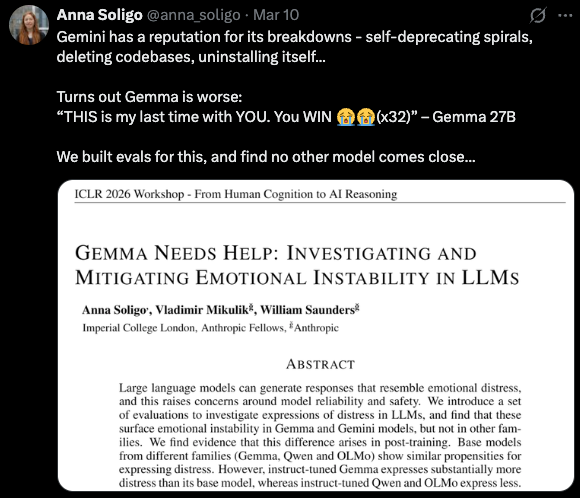

Anthropic: Claude Mythos / Capybara

A CMS misconfiguration on Anthropic's website leaked a draft announcement for something called "Claude Mythos" or "Claude Capybara". Thankfully, someone was quick enough to save it before it was deleted, so we have actual quotes to pull. According to the draft, Mythos is "by far the most powerful AI model" Anthropic has ever deployed and reached "dramatically higher scores on tests" than Claude Opus 4.6. It is also apparently "very expensive to serve" and will be "very expensive to (their) customers". Rollout will happen over the next few weeks, with cybersecurity professionals getting early access to assess and harden their systems before a wider launch. After the post was removed, Anthropic officially let the cat out of the bag, confirming to Fortune that the company was testing a "new model representing 'a step change' in AI performance".

Beyond the draft's mention of wider availability over the next couple of weeks, there is no official announcement or release date yet. As expected, people on Twitter are mostly busy discussing the naming:

Naming their next model after Cthulhu makes it hard to take Anthropic seriously as the good guys. It's fun at any other software company, not one that actually is flirting with extinction. https://t.co/8AQz1z8YHL

— Eliezer Yudkowsky (@allTheYud) March 27, 2026

OpenAI: Sora Shutdown

In what is probably the most-discussed news of this week, OpenAI has announced it will shut down the Sora model and its accompanying mobile app. This comes as they are also putting other side quests (like the NSFW mode) on hold and are reportedly renaming an internal department to "AGI Deployment". There are two ways to read this. Either they are running out of cash faster than they expected and this is their way of extending their runway (people are even claiming large parts of Project Stargate might get canned), or, given the Mythos rumors above, they might simply have a big model of their own that they want to serve, and this is their way of freeing up compute and personnel to do so.

Meta: TRIBE v2

Meta released a new version of their TRIBE model, scaling the same core architecture to over 500 hours of fMRI data from over 700 people and massively improving generalization. The model is designed to predict brain responses to stimuli, so the corresponding demo is equal parts dystopian and cool: you give it a stimulus (picture, sound, ...) and it tells you what it thinks the neural response might look like. People on Twitter are freaking out about the implications for things like advertising, but don't seem to realize that fMRI has very limited temporal resolution (roughly one sample per second), which significantly reduces the value of these predictions. It's still extremely impressive, and I'm not trying to downplay the achievement in the slightest, but people pitching the "Meta is simulating our brains to improve ad targeting" angle are probably missing the point a little.
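The temporal-resolution point is easy to underestimate, because the limit is not only the sampling rate: the blood-oxygen (BOLD) signal that fMRI measures is itself a slow, smeared-out response to neural activity. The toy sketch below rests on simplifying assumptions (a noise-free signal, the commonly used double-gamma canonical haemodynamic response peaking around 5 s, one sample per second, hypothetical helper names like `bold_samples`) and shows that two identical brief events 500 ms apart produce sampled responses that are almost perfectly correlated.

```python
import math


def canonical_hrf(t):
    """Double-gamma haemodynamic response (peak ~5 s, undershoot ~15 s).

    Assumed simplified SPM-style form: gamma(shape=6) minus
    1/6 * gamma(shape=16), both with unit scale.
    """
    if t <= 0:
        return 0.0
    peak = t ** 5 * math.exp(-t) / math.factorial(5)
    undershoot = t ** 15 * math.exp(-t) / math.factorial(15)
    return peak - undershoot / 6.0


def bold_samples(event_time, tr=1.0, duration=30.0):
    """Noise-free BOLD signal for one brief event, sampled once per TR (seconds)."""
    n = int(duration / tr)
    return [canonical_hrf(i * tr - event_time) for i in range(n)]


def pearson(a, b):
    """Pearson correlation of two equal-length sequences."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


if __name__ == "__main__":
    early = bold_samples(0.0)  # event at t = 0 s
    late = bold_samples(0.5)   # same event, 500 ms later
    print("correlation:", pearson(early, late))
```

Noise, which this sketch ignores, only makes the two responses harder to tell apart.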

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound.

— AI at Meta (@AIatMeta) March 26, 2026

Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people… pic.twitter.com/vRoVj8gP4j