Parameter Update: 2026-10

"k2.5-v2-cursor" edition

Missed last week because I was in the UK getting my brain scanned (among other things), but that's fine, as we've mostly got a lot of smaller stories this time around. Also, my Twitter embeds broke, so enjoy the screenshots until I get around to fixing that, I guess.

Gemini Embedding 2

Google has announced their new "Gemini Embedding 2" model, which aims to cover as many modalities as possible. This wouldn't really be all that notable, except this apparently includes PDFs? Also: It's really expensive for an embedding model?

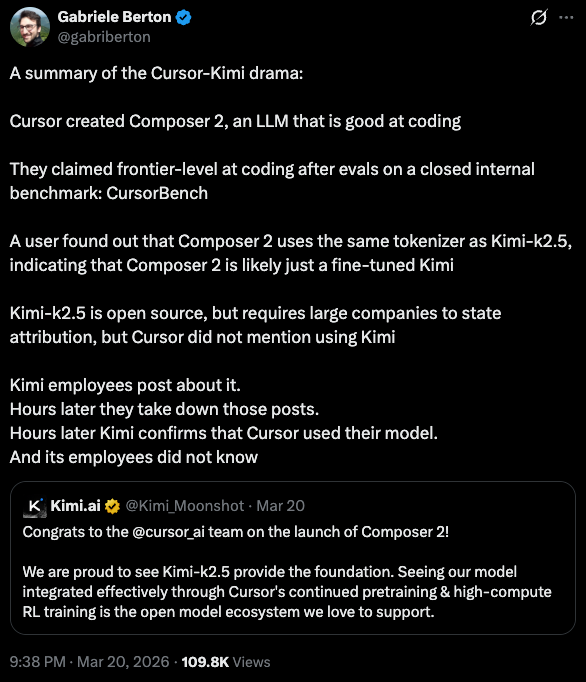

Cursor: Composer 2

Cursor has long struggled to become more than an API wrapper. One of the ways they are trying to get there is their own series of "Composer" models. This week, they announced "Composer 2", which is competitive in coding with models from most of the big labs. That would be really impressive, if people hadn't very quickly caught on to the fact that Composer 2 is really just a Kimi K2.5 fine-tune.

Financially, this actually makes a lot of sense - Cursor has a lot of great coding data, and a full pre-training run would be very wasteful for their narrow use case. On the other hand, it has been very funny seeing the explosion of backlash and eventual clarifications by the Cursor and Kimi teams that yes, Composer 2 is just K2.5 in a trenchcoat, but the model is still very good and licensing was handled before launch, so everything is fine, actually.

Yann LeCun: Advanced Machine Intelligence

Someone on Twitter once asked how much money Yann LeCun would be able to raise by himself for his own AI lab. Now we have a definitive answer: At least $1.03B! AMI Labs is a public bet against the current trend of LLMs, aiming to build "a new breed of AI systems that (1) understand the real world, (2) have persistent memory, (3) can reason and plan, (4) are controllable and safe."

Unveiling our new startup Advanced Machine Intelligence (AMI Labs).

We just completed our seed round: $1.03B / 890M€, one the largest seeds ever, probably the largest for a European company.

We're hiring!

[the background image is the Veil Nebula - a picture I took from my… https://t.co/voyuA5nwiL

— Yann LeCun (@ylecun) March 10, 2026

Nvidia: GTC 2026

DLSS 5 Drama

DLSS is one of those Nvidia technologies that gamers have been arguing about for a long while now. It can help run games at much better resolution and higher framerate by using AI to interpolate and upscale frames, which is really nice, but it potentially also incentivizes Nvidia to sell underpowered GPUs and can screw with the artistic intent behind the games.

At GTC this week, Nvidia announced DLSS 5, due to arrive later this year, which will completely re-do faces and environmental details in some cases by running the entire scene through a real-time video AI model, running on a separate GPU. There has been a lot of backlash about it, which makes sense, but I am still wondering if Nvidia might just be able to pull off another stunt here - people also hated DLSS 1 when it came out and are absolutely willing to pay more for it now, so maybe let's hold out until the feature actually drops.

Nemotron Coalition

Also at GTC, Nvidia announced the "Nemotron Coalition", a group of companies that would, moving forward, "collaboratively build open frontier models". This is especially notable given it includes LangChain (which aren't really training any models?), Reflection AI (which to my understanding got acquihired a while ago), and Thinking Machines (which no one really knows what they are up to right now?). It remains to be seen if any of this turns out to be more than PR fluff, but that's certainly an interesting lineup.

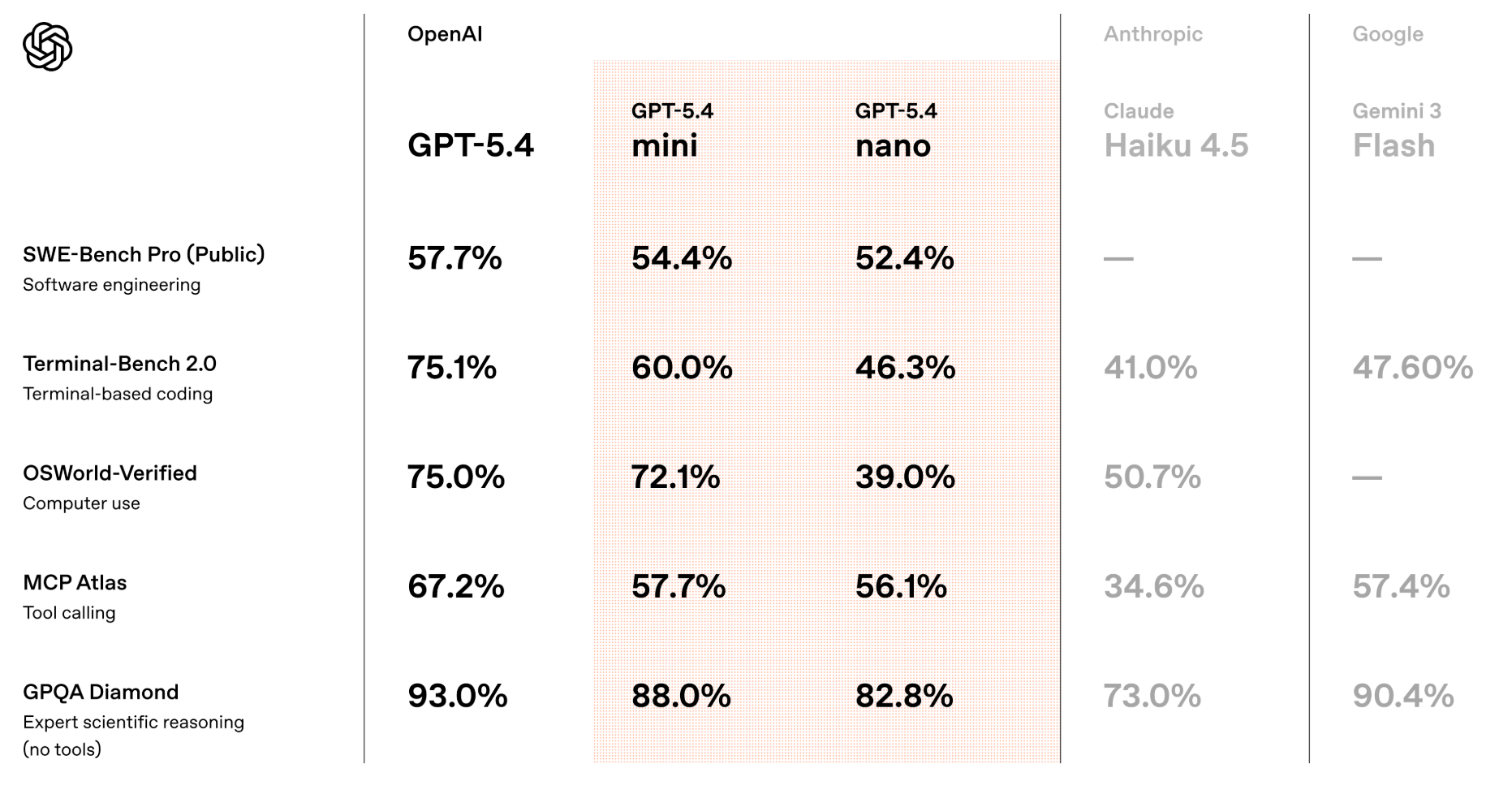

OpenAI launched GPT-5.4 mini and nano

After the regular models received updates over the last few weeks, it was about time for OpenAI to give the '-mini' models some love. These seem like solid upgrades over the models each of them is replacing (and also solidly better than the competition), but I remain skeptical about most production use cases for these models.