Parameter Update: 2026-08

"peaceclaude" edition

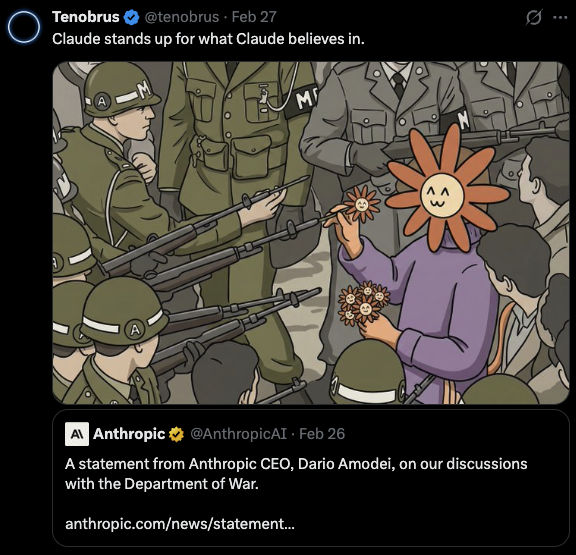

Missed last week's post because I was in Paris for HackEurope, so this week's busier than usual. And then there's all the DoW drama, which honestly deserves a post of its own.

DoW Drama

For the past couple of weeks, the US Department of War and Anthropic have been in a very loud dispute over how Claude may be used within the department. While the DoW insisted on "any lawful use" of frontier models inside defense/intel contexts, Anthropic insisted on two explicit carve-outs (mass domestic surveillance + fully autonomous weapons), arguing that law/policy can lag behind capabilities or might be changed, but these two applications should never be permissible.

Despite Anthropic going all-out in a blog post, claiming to be under attack from Chinese labs distilling their models (which.. so what? and also - why does Claude insist on being a DeepSeek model when talked to in Chinese if you're not doing the same thing?), talks soon broke down.

You can also meet Claude at a very Chinese time in its life https://t.co/8cnu6uv6Az pic.twitter.com/au4l6EuOi4

— Terry Yue Zhuo (@terryyuezhuo) February 23, 2026

Immediately after, the Pentagon/DoW went ahead and forced a phase-out of Claude within the department. Furthermore, in a step that is hard to read as anything but punitive, it designated Anthropic a “supply chain risk”, which, to my understanding, forces any company doing business with the DoW to also phase out usage of Anthropic products.

frame this and put it on a wall pic.twitter.com/O4OVUg9ZiN

— Shikhar (@shekhu04) February 28, 2026

While Anthropic has announced plans to challenge the designation in court, the process will take a while, and leaves Anthropic in a precarious position. Adding insult to injury, hours after the designation was made public, and hours before US strikes on Iran, OpenAI announced they struck a deal with the DoW to step in as Anthropic's replacement.

Yesterday we reached an agreement with the Department of War for deploying advanced AI systems in classified environments, which we requested they make available to all AI companies.

— OpenAI (@OpenAI) February 28, 2026

We think our deployment has more guardrails than any previous agreement for classified AI…

OpenAI claims the deal it has made with the DoW includes the same red lines Anthropic insisted on, and is actually more enforceable than Anthropic's old contract, because it relies on cloud-only deployments with cleared OpenAI personnel "in the loop". However, they also directly shared portions of the contract's language and, while I am not a lawyer, it feels like the wording leaves huge, gaping holes that pretty much exactly match Anthropic's claims about the contract they rejected.

OpenAI has released the language in their contract with the DoW, and it's exactly as Anthropic was claiming: "legalese that would allow those safeguards to be disregarded at will".

— Lawrence Chan (@justanotherlaw) February 28, 2026

Note: the first paragraph doesn't say "no autonomous weapons"! It says "AI can't control… pic.twitter.com/4QBQesfALD

No idea how this whole thing will play out, and there must be more to this story than what's circulating on twitter, but at this point, the whole thing feels like an extremely stupid own goal for both the DoW and OpenAI.

Can’t wait for WW3, WW3o, WW3.5 mini

— Kevin (@itskevin) February 28, 2026

I like how Meta fucked llama 4 so badly that they aren’t even part of the conversation anymore

— JB (@JasonBotterill) February 28, 2026

DeepMind

Nano Banana 2

While Nano Banana Pro has been by far the best image generation model for a bit now, it was always pretty slow and expensive. Nano Banana 2, then, is positioned as a faster, cheaper alternative that produces similar-quality images that, ideally, pop a bit more than the original Nano Banana (which tended to output realistic, but sometimes boring, pictures). In my testing, it's still clearly worse than Nano Banana Pro at most tasks, and the "more lively" images end up looking cheaper than I would like. That being said, it is better at character consistency and supports Search Grounding, which feels like an underdiscussed addition. Overall, it feels like a very good baseline model that excels at 95% of requests, with Nano Banana Pro handling the remainder.

We’re launching Nano Banana 2, built on the latest Gemini Flash model. 🍌

— Google DeepMind (@GoogleDeepMind) February 26, 2026

It’s state-of-the-art for creating and editing images, combining Pro-level capabilities with lightning-fast speed. 🧵 pic.twitter.com/b3sHCAhrSy

Gemini 3.1 Pro

After OpenAI's and Anthropic's model launches over the last few weeks, I've been waiting on Google to pull back up. Two weeks ago, we got an updated Gemini 3 Deep Think, which was a bit of a teaser for this drop - Gemini 3.1 Pro.

It pulls way ahead in ARC-AGI-2 (33% -> 77% from Gemini 3 to 3.1!), and is basically SOTA in every benchmark but SWE-Bench. That being said, benchmarks are worth less and less these days, so let's see if they actually fixed the hallucination issues that effectively killed Gemini 3 for agentic coding the moment Opus 4.5 became available.

Gemini 3.1 Pro is here. Hitting 77.1% on ARC-AGI-2, it’s a step forward in core reasoning (more than 2x 3 Pro).

— Sundar Pichai (@sundarpichai) February 19, 2026

With a more capable baseline, it’s great for super complex tasks like visualizing difficult concepts, synthesizing data into a single view, or bringing creative… pic.twitter.com/aEs0LiylQZ

Lyria 3

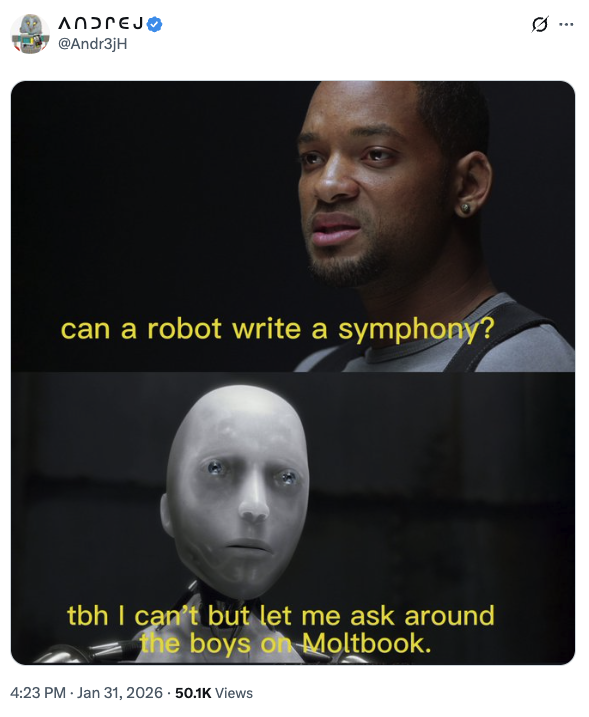

Here's something I didn't have on my bingo card (but maybe should have) - Google has dropped a new music model. And it's good!

I haven't played around with it enough to compare it to Suno (their V5 model is excellent), but given that this will be available for free to all Gemini users, they might have a hard time competing.

We just dropped Lyria 3: our latest generative music model. 🔊

— Google DeepMind (@GoogleDeepMind) February 18, 2026

It can turn photos and text into dynamic tracks - complete with vocals and lyrics. 🧵 pic.twitter.com/Bd2CHCSthO

Anthropic

Opus 3 Retirement

In contrast to the GPT-4o drama OpenAI has been facing over the last couple.. months?, Anthropic seems to be trying their best to ensure Opus 3 gets the retirement it deserves. First, the model will continue being available for paid users for the time being. Second, they have started a separate access program for researchers who want to keep playing with it. Finally, and this one is a bit weird, they gave it a Substack?

For the next couple of months, Opus 3 will get to share its thoughts with the world. I love these kinds of experiments, so I am delighted to see this, though I am curious about exactly how much of these posts Opus is writing of its own volition (vs. just being prompted to do so).

Second, in retirement interviews, Opus 3 expressed a desire to continue sharing its "musings and reflections" with the world. We suggested a blog. Opus 3 enthusiastically agreed.

— Anthropic (@AnthropicAI) February 25, 2026

For at least the next 3 months, Opus 3 will be writing on Substack: https://t.co/HlvAKLp9M4 pic.twitter.com/Sh6uKmXG2n

OpenAI

gpt-realtime-1.5

Very minor update to the realtime audio model, but most use cases should run a bit smoother now. What perplexes me is that we haven't seen any sort of agentic/long-running audio-native tasks/deployments that I am aware of, nor have we seen the models getting much better since GPT-4o Advanced Voice Mode dropped. I wonder why that is? Could just be latency constraints?

🗣️ gpt-realtime-1.5 delivers a +5% intelligence lift on Big Bench Audio, which measures reasoning ability, as well as +10.23% on alphanumeric transcription and +7% on instruction following in internal evals.https://t.co/oJMyFOzqQQ

— OpenAI Developers (@OpenAIDevs) February 23, 2026

WebSockets in Responses API

Small protocol update with potentially large consequences - the Responses API supports WebSockets now. In practice, that means much faster tool calls (up to 40%, apparently) for long-running agents at the cost of a slight delay during initial agent start.
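To get a feel for when that trade-off pays off, here's a back-of-the-envelope sketch. Everything in it is an assumption on my part: the function name, the idea that "40% faster" means each tool call takes 40% less time, and the example numbers (500 ms per call, 1 s of extra startup) are made up for illustration and are not from OpenAI.

```python
def break_even_calls(per_call_ms: int, speedup_pct: int, setup_ms: int) -> int:
    """Smallest number of tool calls at which the time saved per call
    strictly exceeds the one-off connection setup cost.

    Hypothetical model: every call saves per_call_ms * speedup_pct / 100,
    and the persistent connection costs setup_ms once at agent start.
    """
    if per_call_ms <= 0 or speedup_pct <= 0:
        raise ValueError("per-call latency and speedup must be positive")
    if setup_ms < 0:
        raise ValueError("setup cost cannot be negative")
    # We need n * per_call_ms * speedup_pct / 100 > setup_ms.
    # Integer arithmetic avoids floating-point edge cases at the boundary.
    return (setup_ms * 100) // (per_call_ms * speedup_pct) + 1


if __name__ == "__main__":
    # Made-up numbers: 500 ms per tool call, 40% saved, 1000 ms extra startup.
    print(break_even_calls(500, 40, 1000))
```

Under those made-up numbers the startup delay is paid back after a handful of tool calls, which is why this matters mostly for long-running agents and barely at all for one-shot requests.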

Introducing WebSockets in the Responses API.

— OpenAI Developers (@OpenAIDevs) February 23, 2026

Built for low-latency, long-running agents with heavy tool calls.https://t.co/qmOAhidk7o pic.twitter.com/feiGpewQaE

Block: Workforce Reduction

There's a new data point in the ongoing debate of when exactly all software engineers will lose their jobs - Block (ex Square, owning Cash App/Tidal/...) has laid off around 40% of their employees, with a focus on cutting developers. CEO Jack Dorsey (who also co-founded Twitter, btw) has promised the company will provide a very generous benefits package for people affected (20 weeks salary + 1 week per year of tenure, equity vested through the end of May, 6 months of health care, corporate devices, and $5,000), so this is a pretty sharp contrast to the Twitter cuts Elon made (that many people are comparing this to). There is also an argument that this is mostly a reckoning from the COVID hiring spree many tech companies went on, so maybe there is limited generalization.

However you spin it, though, 4000 employees lost their jobs, and Block's stock is up >20%.
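For a sense of scale, here's the cash part of that package as a quick sketch. The function names and the example salary are hypothetical, and I'm assuming tenure is counted in whole years and a week's pay is annual salary divided by 52; equity, health care and devices are left out.

```python
def severance_weeks(tenure_years: int) -> int:
    """20 weeks of salary plus 1 week per year of tenure (whole years assumed)."""
    if tenure_years < 0:
        raise ValueError("tenure cannot be negative")
    return 20 + tenure_years


def severance_cash(annual_salary: float, tenure_years: int) -> float:
    """Salary continuation plus the flat $5,000 payment.

    Assumes a week's pay is annual_salary / 52; this is a simplification,
    not Block's actual formula.
    """
    weekly_pay = annual_salary / 52
    return weekly_pay * severance_weeks(tenure_years) + 5_000


if __name__ == "__main__":
    # Hypothetical employee: $104,000 salary, 4 years at the company.
    print(severance_weeks(4))
    print(severance_cash(104_000, 4))
```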

we're making @blocks smaller today. here's my note to the company.

— jack (@jack) February 26, 2026


today we're making one of the hardest decisions in the history of our company: we're reducing our organization by nearly half, from over 10,000 people to just under 6,000. that means over 4,000 of you are…